Windows Memory Mapped File Flush

Managing Memory-Mapped Files

Randy Kath
Microsoft Developer Network Technology Group

Created: February 9, 1

Abstract

Determining which function or set of functions to use for managing memory in your application is difficult without a solid understanding of how each group of functions works and the overall impact each has on the operating system.

In an effort to simplify these decisions, this technical article focuses on the use of the memory-mapped file functions in Windows: the functions that are available, the way they are used, and the impact their use has on operating system resources. The following topics are discussed in this article.

- Introduction to managing memory in Windows operating systems
- What are memory-mapped files?
- How are memory-mapped files implemented?
- Sharing memory with memory-mapped files
- Using memory-mapped file functions

In addition to this technical article, a sample application called ProcessWalker is included on the Microsoft Developer Network CD. This sample application is useful for exploring the behavior of memory-mapped files in a process, and it provides several useful implementation examples.

Introduction

This is one of three related technical articles ("Managing Virtual Memory," "Managing Memory-Mapped Files," and "Managing Heap Memory") that explain how to manage memory in applications for Windows. In each article, this introduction identifies the basic memory components in the Windows API programming model and indicates which article to reference for specific areas of interest.

The first version of the Windows operating system introduced a method of managing dynamic memory based on a single global heap, which all applications and the system share, and multiple private local heaps, one for each application. Local and global memory management functions were also provided, offering extended features for this new memory management system. More recently, the Microsoft C run-time (CRT) libraries were modified to include capabilities for managing these heaps in Windows using native CRT functions such as malloc and free. Consequently, developers are now left with a choice: learn the new application programming interface (API) provided as part of Windows, or stick to the portable and typically familiar CRT functions for managing memory in applications written for Windows.

Windows offers three groups of functions for managing memory in applications: memory-mapped file functions, heap memory functions, and virtual-memory functions. Memory-mapped files can be shared across multiple processes. Processes can map to the same memory-mapped file by using a common name that is assigned by the process that created the file.

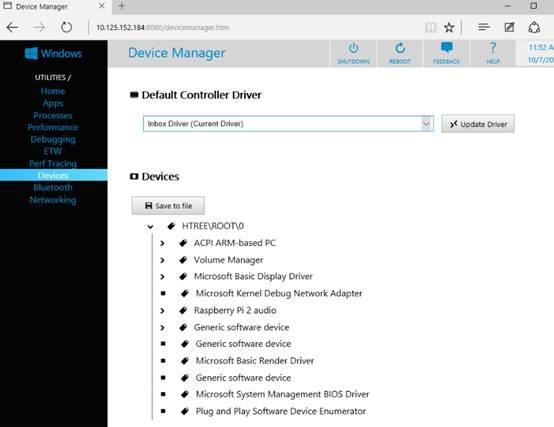

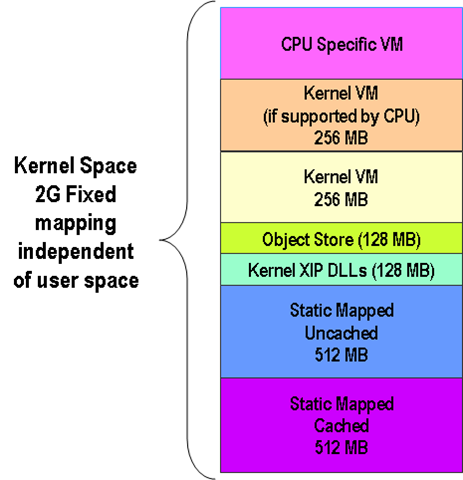

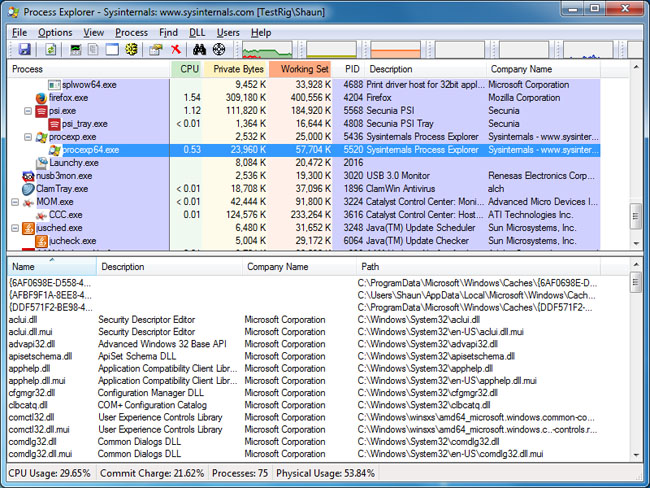

Figure 1. The Windows API provides different levels of memory management for versatility in application programming.

In all, six sets of memory management functions exist in Windows, as shown in Figure 1, all of which were designed to be used independently of one another.

A memory-mapped file is a segment of virtual memory that has been assigned a direct byte-for-byte correlation with some portion of a file or file-like resource. This resource is typically a file that is physically present on disk. In Windows, a memory-mapped file (MMF) is a kernel object that maps a disk file to a region of memory address space. When sharing memory between processes on Windows, the main roadblock is that each process lives in its own virtual address space: you unfortunately can't pass normal memory addresses around from process to process and get the results you'd expect.

So which set of functions should you use?
The answer to this question depends greatly on two things: the type of memory management you want and how the functions relevant to it are implemented in the operating system. In other words, are you building a large database application where you plan to manipulate subsets of a large memory structure? Or are you planning some simple dynamic memory structures, such as linked lists or binary trees? In both cases, you need to know which functions offer the features best suited to your intention and exactly how much of a resource hit occurs when using each function.

Table 1 categorizes the memory management function groups and indicates which of the three technical articles in this series describes each group's behavior. Each technical article emphasizes the impact these functions have on the system by describing the behavior of the system in response to using the functions.

Table 1. Various Memory Management Functions Available

| Memory set | System resource affected | Related technical article |
|---|---|---|
| Virtual memory functions | A process's virtual address space; system pagefile; system memory; hard disk space | "Managing Virtual Memory" |
| Memory-mapped file functions | A process's virtual address space; system pagefile; standard file I/O; system memory; hard disk space | "Managing Memory-Mapped Files" |
| Heap memory functions | A process's virtual address space; system memory; process heap resource structure | "Managing Heap Memory" |
| Global heap memory functions | A process's heap resource structure | "Managing Heap Memory" |
| Local heap memory functions | A process's heap resource structure | "Managing Heap Memory" |
| C run-time reference library | A process's heap resource structure | "Managing Heap Memory" |

Each technical article discusses issues surrounding the use of Windows-specific functions.

What Are Memory-Mapped Files?

Memory-mapped files (MMFs) offer a unique memory management feature that allows applications to access files on disk in the same way they access dynamic memory: through pointers. With this capability you can map a view of all or part of a file on disk to a specific range of addresses within your process's address space. Once that is done, accessing the content of a memory-mapped file is as simple as dereferencing a pointer in the designated range of addresses. So writing data to a file can be as simple as assigning a value to a dereferenced pointer, as in `*pMem = value;`. Similarly, reading from a specific location within the file is simply `value = *pMem;`.

In these examples, the pointer pMem represents an arbitrary address in the range of addresses that have been mapped to a view of a file. Each time the address is referenced (that is, each time the pointer is dereferenced), the memory-mapped file is the actual memory being addressed.

Note: While memory-mapped files offer a way to read and write directly to a file at specific locations, the actual reading from and writing to the disk is handled at a lower level. Consequently, data is not actually transferred at the time the above instructions are executed. Instead, much of the file input/output (I/O) is cached to improve general system performance. You can override this behavior and force the system to perform disk transactions immediately by using the memory-mapped file function FlushViewOfFile, explained later.

What Do Memory-Mapped Files Have to Offer?

One advantage to using MMF I/O is that the system performs all data transfers for it in 4K pages of data. Internally, all pages of memory are managed by the virtual-memory manager (VMM).
It decides when a page should be paged to disk, which pages are to be freed for use by other applications, and how many pages each application can have out of the entire allotment of physical memory. Since the VMM performs all disk I/O in the same manner (reading or writing memory one page at a time), it has been optimized to make it as fast as possible. Limiting disk reads and writes to sequences of 4K pages means that several smaller reads or writes are effectively cached into one larger operation, reducing the number of times the hard disk read/write head moves. Reading and writing pages of memory at a time is sometimes referred to as paging and is common to virtual-memory operating systems.

Another advantage to using MMF I/O is that all of the actual I/O interaction now occurs in RAM in the form of standard memory addressing. Meanwhile, disk paging occurs periodically in the background, transparent to the application.

While no gain in performance is observed when using MMFs simply to read a file into RAM, other disk transactions can benefit immensely. Say, for example, an application implements a flat-file database structure, where the database consists of hundreds of sequential records. Accessing a record within the file is simply a matter of determining the record's location (a byte offset within the file) and reading the data from the file. Then, for every update, the record must be written to the file in order to save the change. For larger records, it may be advantageous to read only part of the record into memory at a time, as needed. Unfortunately, each time a new part of the record is needed, another file read is required.

The MMF approach works a little differently. When the record is first accessed, the entire 4K page (or pages) of memory containing the record is read into memory. All subsequent accesses to that record deal directly with the page(s) of memory in RAM.
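The record lookup just described comes down to a little offset arithmetic. The following portable C sketch assumes fixed-size records stored back to back from the start of the file, a 4K page size, and a 64 KB allocation granularity (typical values, hard-coded here only for illustration; a real program would read `dwPageSize` and `dwAllocationGranularity` from `GetSystemInfo`). For a given record it computes the byte offset, which pages the VMM would have to read in, and the granularity-aligned starting offset that `MapViewOfFile` requires, split into the high and low 32-bit values passed as `dwFileOffsetHigh` and `dwFileOffsetLow`. The names `locate_record` and `struct record_location` are hypothetical, and the Windows calls themselves are left out so the sketch compiles anywhere.

```c
#include <assert.h>
#include <stdint.h>

/* Assumed sizes for illustration only; on Windows, query GetSystemInfo
   (dwPageSize, dwAllocationGranularity) instead of hard-coding them. */
#define PAGE_SIZE_ASSUMED 4096u
#define GRANULARITY_ASSUMED 65536u

/* Hypothetical description of where one fixed-size record lives. */
struct record_location {
    uint64_t offset;      /* byte offset of the record in the file */
    uint64_t first_page;  /* index of the first page containing the record */
    uint64_t page_count;  /* pages that must be in RAM to touch the record */
    uint64_t view_offset; /* offset rounded down to the allocation granularity */
    uint64_t delta;       /* where the record starts inside that view */
    uint32_t offset_high; /* upper 32 bits of view_offset */
    uint32_t offset_low;  /* lower 32 bits of view_offset */
};

/* Locate record `index` in a file of `record_size`-byte records that are
   stored back to back starting at file offset 0. */
struct record_location locate_record(uint64_t index, uint64_t record_size)
{
    assert(record_size > 0);
    struct record_location loc;
    uint64_t last_byte;

    loc.offset = index * record_size;
    last_byte = loc.offset + record_size - 1;

    /* Pages are the unit the VMM reads and writes. */
    loc.first_page = loc.offset / PAGE_SIZE_ASSUMED;
    loc.page_count = last_byte / PAGE_SIZE_ASSUMED - loc.first_page + 1;

    /* A view's starting offset must be a multiple of the granularity. */
    loc.view_offset = loc.offset - loc.offset % GRANULARITY_ASSUMED;
    loc.delta = loc.offset - loc.view_offset;
    loc.offset_high = (uint32_t)(loc.view_offset >> 32);
    loc.offset_low = (uint32_t)(loc.view_offset & 0xFFFFFFFFu);
    return loc;
}
```

For example, with 3000-byte records, record 1 occupies bytes 3000 through 5999, so it straddles pages 0 and 1 and touching it brings in two pages. Once a view has been mapped starting at `view_offset`, the record sits at the view's base address plus `delta`, and reading or updating it is an ordinary pointer dereference.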
No disk I/O is required or enforced until the file is later closed or flushed.

Note: During normal system paging operations, memory-mapped files can be updated periodically. If the system needs a page of memory that is occupied by a page representing a memory-mapped file, it may free the page for use by another application. If the page was dirty at the time it was needed, writing the data to disk automatically updates the file at that time. (A dirty page is a page of data that has been written to but not yet saved to disk; for more information on types of virtual-memory pages, see "The Virtual-Memory Manager in Windows NT" on the Developer Network CD.)

The flat-file database application example is useful in pointing out another advantage of using memory-mapped files. MMFs provide a mechanism to map portions of a file into memory as needed. This means that applications now have a way of getting to a small segment of data in an extremely large file without having to read the entire file into memory first. Using the above example of a large flat-file database, consider a database file housing 1,0. The file size necessary to store this database would be 1,0. Reading a file that large would require an extremely large amount of memory. With MMFs, the entire file can be opened (at this point no memory is required for reading the file) and a view (portion) of the file can be mapped to a range of addresses. Then, as mentioned above, each page in the view is read into memory only when addresses within the page are accessed.

How Are They Implemented?

Sharing memory between two processes (C, Windows)

Although Windows supports shared memory through its file mapping API, you can't easily inject a shared memory mapping into another process directly, as MapViewOfFileEx does not take a process argument. However, you can inject some data by allocating memory in another process using VirtualAllocEx and WriteProcessMemory.
If you were to copy in a handle using DuplicateHandle, then inject a stub that calls MapViewOfFileEx, you could establish a shared memory mapping in another process. Since it sounds like you'll be injecting code anyway, this ought to work well for you. To summarize, you'll need to:

1. Create an anonymous shared memory segment handle by calling CreateFileMapping with INVALID_HANDLE_VALUE for hFile and NULL for lpName.
2. Copy this handle into the target process with DuplicateHandle.
3. Allocate some memory for code using VirtualAllocEx, with flAllocationType = MEM_COMMIT | MEM_RESERVE and flProtect = PAGE_EXECUTE_READWRITE.
4. Write your stub code into this memory using WriteProcessMemory. This stub will likely need to be written in assembler. Pass the HANDLE from DuplicateHandle by writing it in here somewhere.
5. Execute your stub using CreateRemoteThread. The stub must then use the HANDLE it obtained to call MapViewOfFileEx. The processes will then have a common shared memory segment.

You may find it a bit easier if your stub loads an external library; that is, have it simply call LoadLibrary (finding the address of LoadLibrary is left as an exercise to the reader) and do your work from the library's DllMain entry point. In this case, using named shared memory is likely to be simpler than futzing around with DuplicateHandle. See the MSDN article on CreateFileMapping for more details, but essentially, pass INVALID_HANDLE_VALUE for hFile and a name for lpName.

Edit: Since your problem is passing data and not actual code injection, here are a few options.

- Use variable-sized shared memory. Your stub gets the size and either the name of or a handle to the shared memory. This is appropriate if you need to exchange data only once. Note that the size of a shared memory segment cannot easily be changed after creation.
- Use a named pipe. Your stub gets the name of or a handle to the pipe. You can then use an appropriate protocol to exchange variable-sized blocks: for example, write a size_t for the length, followed by the actual message. Or use PIPE_TYPE_MESSAGE and PIPE_READMODE_MESSAGE, and watch for ERROR_MORE_DATA to determine where messages end. This is appropriate if you need to exchange data multiple times.

Edit 2: Here's a sketch of how you might implement handle or pointer storage for your stub: `B8 ;; mov eax, imm`. You could also just use a fixed name, which is probably simpler.
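The length-prefixed protocol suggested for the named-pipe option (a size_t length followed by the message) can be sketched in portable C. The code below frames a message into a byte buffer and parses frames back out; in a real program those bytes would travel through `WriteFile` and `ReadFile` on the pipe handle, which the sketch leaves out. The function names `frame_write` and `frame_read` are hypothetical, and both ends are assumed to agree on the size and byte order of `size_t`, which holds when both processes are the same build on the same machine.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical: write one frame (size_t length prefix, then payload) to `out`.
   Returns the number of bytes written, or 0 if `cap` is too small. */
size_t frame_write(unsigned char *out, size_t cap, const void *msg, size_t len)
{
    size_t need = sizeof(size_t) + len;
    assert(out != NULL && msg != NULL);
    if (cap < need)
        return 0;
    memcpy(out, &len, sizeof len);      /* length prefix */
    memcpy(out + sizeof len, msg, len); /* message bytes */
    return need;
}

/* Hypothetical: parse one frame from `in`, which holds `avail` bytes.
   On success, points *msg at the payload, stores its length in *len, and
   returns the total bytes consumed. Returns 0 if the frame is incomplete,
   meaning more data must be read from the pipe first. */
size_t frame_read(const unsigned char *in, size_t avail,
                  const unsigned char **msg, size_t *len)
{
    size_t n;
    if (avail < sizeof n)
        return 0; /* length prefix not fully received yet */
    memcpy(&n, in, sizeof n);
    if (avail - sizeof n < n)
        return 0; /* payload not fully received yet */
    *msg = in + sizeof n;
    *len = n;
    return sizeof n + n;
}
```

Because a read from a byte-mode pipe can return only part of a frame, a receiver would append incoming bytes to a buffer and call `frame_read` until it returns 0, then read more from the pipe. The message-mode alternative (PIPE_READMODE_MESSAGE) lets the pipe itself mark message boundaries instead.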


October 2016
